University of Illinois at Urbana-Champaign (UIUC)

RoboSoft + RA-L 2022

Abstract

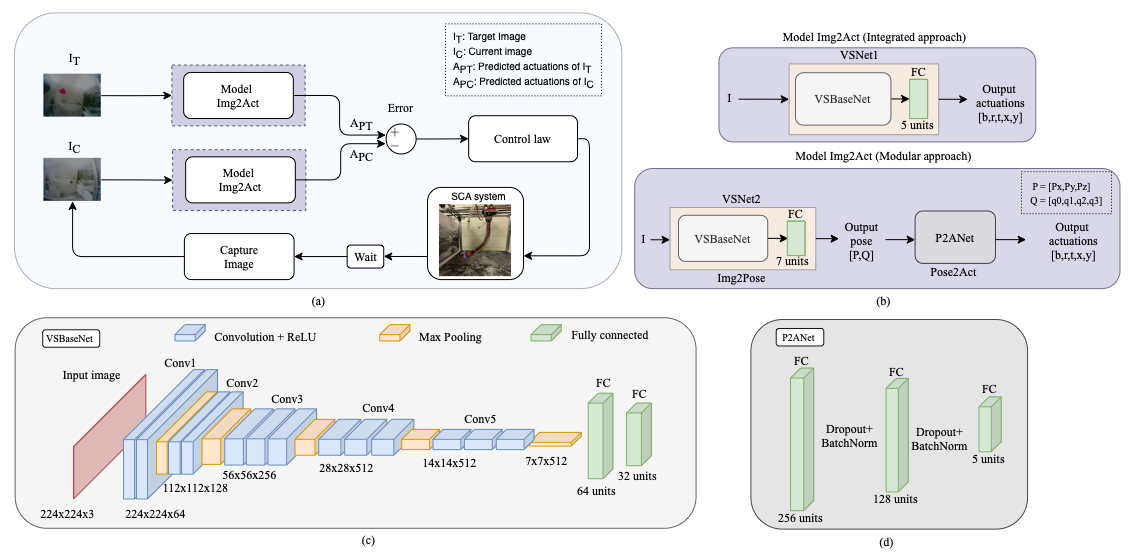

For soft continuum arms, visual servoing is a popular control strategy that relies on visual feedback to close the control loop. However, robust visual servoing is challenging because it requires reliable feature extraction from the image, accurate control models, and sensors to perceive the shape of the arm, all of which can be hard to implement in a soft robot. This letter circumvents these challenges by presenting a deep neural network-based method that performs smooth and robust 3D positioning tasks on a soft arm through visual servoing, using a camera mounted at the distal end of the arm. A convolutional neural network is trained to predict the actuations required to achieve the desired pose in a structured environment. Integrated and modular approaches for estimating the actuations from the image are proposed and compared experimentally. A proportional control law is implemented to reduce the error between the desired image and the current image seen by the camera. Together, the model and the proportional feedback control make the approach robust to several variations, such as new targets, lighting, loads, and diminution of the soft arm. Furthermore, the model can be transferred to a new environment with minimal effort.
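The proportional control law mentioned in the abstract can be illustrated with a minimal sketch in actuation space. It assumes, hypothetically, that the network outputs the actuation change needed to move from the current image to the desired one. The stand-in `toy_predict_delta` is an ideal oracle rather than the CNN. The gain, the normalized actuation range [0, 1], and all function names are illustrative assumptions, not the authors' implementation.

```python
import math

def proportional_servo_step(u, predicted_delta, gain=0.5, u_min=0.0, u_max=1.0):
    """Apply one proportional update u <- u + gain * delta, clipped to limits.

    u: current actuation vector (assumed normalized to [u_min, u_max]).
    predicted_delta: actuation change predicted from current/desired images
        (hypothetical network output).
    gain: proportional gain K_p (illustrative value).
    """
    return [min(max(ui + gain * di, u_min), u_max)
            for ui, di in zip(u, predicted_delta)]

def toy_predict_delta(u_current, u_target):
    # Hypothetical stand-in for the CNN: an ideal oracle returning the exact
    # actuation difference. A trained network would infer it from images.
    return [t - c for c, t in zip(u_current, u_target)]

def error_norm(a, b):
    # Euclidean distance between two actuation vectors.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def run_toy_servo(u0, u_target, gain=0.5, max_steps=20, tol=1e-4):
    """Iterate the proportional law until the error drops below tol."""
    u = list(u0)
    history = [error_norm(u, u_target)]
    for _ in range(max_steps):
        delta = toy_predict_delta(u, u_target)  # re-estimated every step
        u = proportional_servo_step(u, delta, gain)
        history.append(error_norm(u, u_target))
        if history[-1] < tol:
            break
    return u, history

if __name__ == "__main__":
    u_final, hist = run_toy_servo([0.1, 0.2, 0.3], [0.6, 0.4, 0.5])
    print(len(hist) - 1, "steps, final error", hist[-1])
```

With a perfect predictor and a gain of 0.5, the residual error halves on every step. Because the update is recomputed from fresh feedback at each iteration, a real network's prediction errors would be corrected on later steps rather than accumulating, which is the role feedback plays in the loop described above.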

Qualitative Results of Various Experiments

Citation

@ARTICLE{9726901,
  author={Kamtikar, Shivani Kiran and Marri, Samhita and Walt, Benjamin Thomas and Uppalapati, Naveen Kumar and Krishnan, Girish and Chowdhary, Girish},
  journal={IEEE Robotics and Automation Letters},
  title={Visual Servoing for Pose Control of Soft Continuum Arm in a Structured Environment},
  year={2022},
  volume={},
  number={},
  pages={1-1},
  doi={10.1109/LRA.2022.3155821}}

Acknowledgement

This work is funded in part by the AIFARMS National AI Institute in Agriculture, supported by Agriculture and Food Research Initiative (AFRI) grant no. 2020-67021-32799/project accession no. 1024178 from the USDA National Institute of Food and Agriculture; by USDA-NSF NRI grant USDA 2019-67021-28989 and NSF 1830343; and by the joint NSF-USDA COALESCE grant USDA 2021-67021-34418.